3 Common MongoDB Mistakes Developers Make (And How to Fix Them)

Common MongoDB problems usually start with SQL thinking. Learn how embedding, duplication, and query awareness lead to better schema design.

A guide to thinking in documents, not tables.

I've seen a lot of MongoDB projects over the years. Some were successes. Some were disasters that ended in painful migrations. The difference between them had nothing to do with the scale of data, the complexity of the domain, or whether MongoDB was "the right choice."

The difference was mindset: whether the team kept thinking in SQL or learned to think in MongoDB.

This Isn't About Which Database Is "Better"

This article isn't here to tell you MongoDB is universally superior to SQL. That debate is meaningless. The real question is: which one fits your situation?

Think of your data as cargo you need to transport. SQL is like a sports car—you make fast, precise trips back and forth to fetch exactly what you need from different locations. MongoDB is like a moving truck—you pack everything from one place and ship it all to the destination in a single trip.

SQL shines when:

- Your data has complex, independent relationships

- Multiple teams need to own and modify different entities separately

- You need strict referential integrity enforced at the database level

- Your access patterns vary wildly and unpredictably

MongoDB shines when:

- Related data is accessed together

- Your application thinks in documents/objects

- Read performance matters more than write complexity

- Your schema needs to evolve quickly

The mistake isn't choosing MongoDB. The mistake is choosing MongoDB and then using it like SQL.

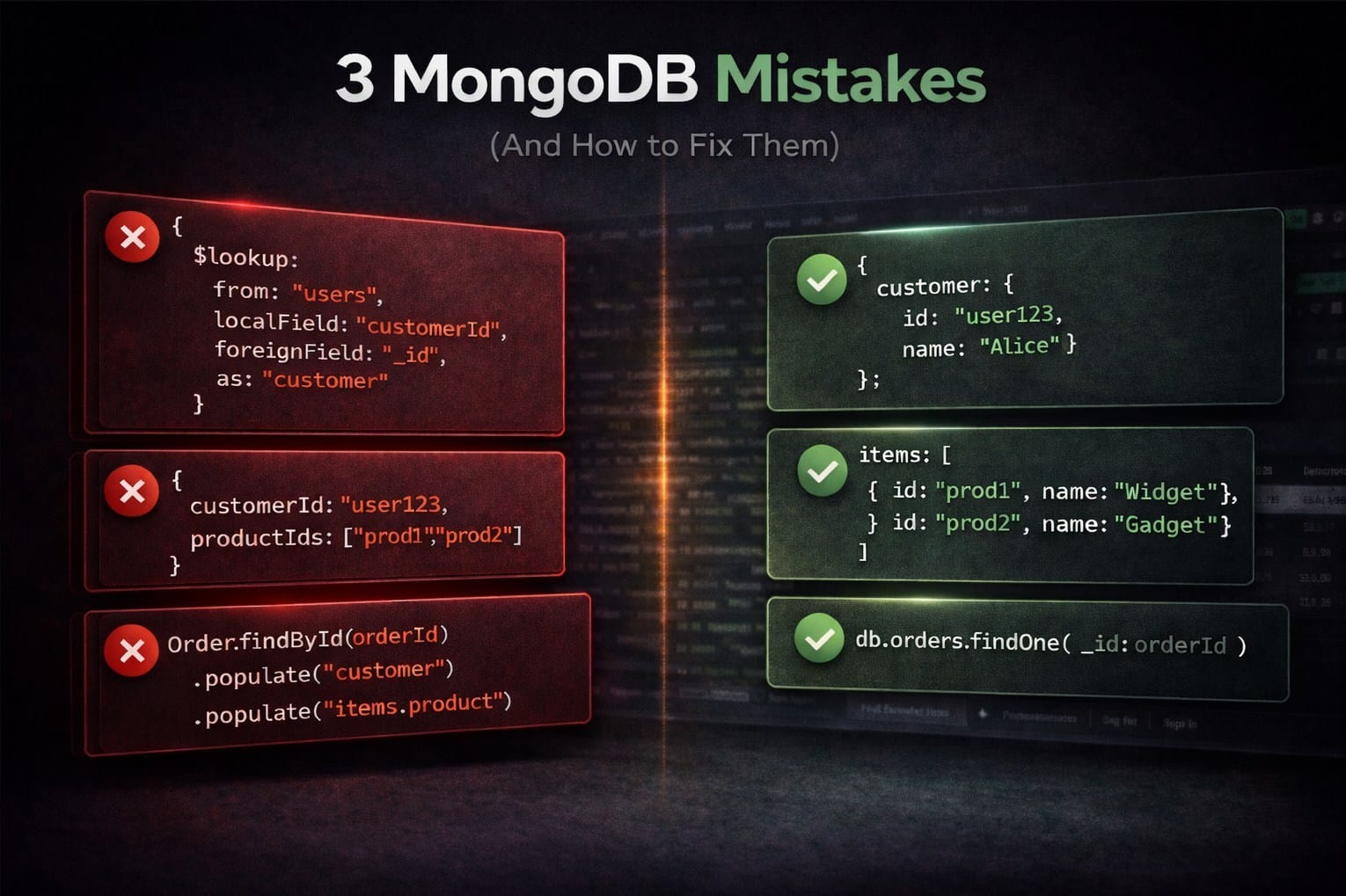

Mistake #1: Designing MongoDB Like It's SQL

I've seen projects where developers designed their MongoDB schema exactly how they would design SQL—creating separate collections for users, orders, order_items, products, categories. Perfectly normalized. And perfectly slow.

They changed their query syntax from SQL to MongoDB but kept thinking in rows and foreign keys. The database changed, but the design did not, so MongoDB's main advantages went unused.

The Golden Rule: Data that is accessed together should be stored together.

Mistake #2: The "Reference Everything" Reflex

SQL trained us that duplication is evil. Normalize. Reference. Never store the same data twice.

This instinct produces MongoDB documents like this:

    // Order document
    // (IDs are shortened for readability; real ObjectIds are 24-character hex strings)
    {
      _id: ObjectId('order123'),
      customerId: ObjectId('customer456'), // Just a reference
      productIds: [ObjectId('prod1'), ObjectId('prod2')], // More references
      shippingAddressId: ObjectId('addr789') // Yet another reference
    }

To display this order, you need four queries. Or an aggregation with three $lookup stages. Either way, you're doing at runtime what could have been done at write time.

Why this happens: MongoDB does not optimize cross-collection joins the way relational databases do. Each $lookup runs as a stage in the aggregation pipeline and adds work for every document that passes through it. You pay that cost on every query that uses $lookup, and in many applications reads significantly outnumber writes.
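For reference, the aggregation route could look roughly like the sketch below. The collection names (`customers`, `products`, `addresses`) and the helper name `buildOrderLookupPipeline` are assumptions for illustration; only the field names come from the order document above.

```javascript
// Hypothetical helper: builds the pipeline needed to reassemble one fully
// referenced order. Collection names are assumed, not taken from a real schema.
function buildOrderLookupPipeline(orderId) {
  return [
    { $match: { _id: orderId } },
    // Join 1: resolve the customer reference
    { $lookup: { from: 'customers', localField: 'customerId', foreignField: '_id', as: 'customer' } },
    // Join 2: resolve every product reference in the array
    { $lookup: { from: 'products', localField: 'productIds', foreignField: '_id', as: 'products' } },
    // Join 3: resolve the shipping address reference
    { $lookup: { from: 'addresses', localField: 'shippingAddressId', foreignField: '_id', as: 'shippingAddress' } },
    // $lookup always produces arrays, so single-valued joins are unwound
    { $unwind: '$customer' },
    { $unwind: '$shippingAddress' }
  ];
}

// Usage (mongosh): db.orders.aggregate(buildOrderLookupPipeline(orderId))
```

Six stages to rebuild something the application always reads as one unit.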

Think Instead: What's the Read/Write Ratio?

In MongoDB, duplicating data is often a valid performance strategy rather than a mistake.

- Purpose: Duplication eliminates the need for lookups (joins) during read operations, significantly increasing speed.

- Trade-off: You gain faster reads at the cost of more complex writes, as you must update the data in multiple places. This is most effective when data is read frequently but updated rarely.
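The write-side cost can be sketched as follows: when a customer changes their email, every order holding a copy may need the same change. This assumes orders embed a `customer` subdocument with `_id` and `email` fields; the helper name is hypothetical.

```javascript
// Hypothetical helper: builds the updateMany arguments that propagate a
// changed email to every order embedding a copy of the customer.
function buildEmailPropagation(customerId, newEmail) {
  return {
    filter: { 'customer._id': customerId },
    update: { $set: { 'customer.email': newEmail } }
  };
}

// Usage with the Node.js driver (inside an async function):
//   const { filter, update } = buildEmailPropagation(customerId, newEmail);
//   await db.collection('customers').updateOne({ _id: customerId }, { $set: { email: newEmail } });
//   await db.collection('orders').updateMany(filter, update);
```

Not every copy should be propagated. A price stored on an order is usually a snapshot of what the customer paid, and it should stay as it was.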

If you write an order once and read it a hundred times, optimize for reads:

    {
      _id: ObjectId('order123'),
      customer: {
        _id: ObjectId('customer456'),
        name: 'Alice Smith',
        email: 'alice@test.com'
      },
      items: [
        { productId: ObjectId('prod1'), name: 'Blue Widget', price: 29.99, qty: 2 },
        { productId: ObjectId('prod2'), name: 'Red Gadget', price: 49.99, qty: 1 }
      ],
      shippingAddress: {
        street: '123 Main St',
        city: 'Seattle',
        state: 'WA',
        zip: '98101'
      },
      total: 109.97
    }

One query. Zero lookups. Done.
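Doing the work at write time might look like the sketch below: the order is assembled once from the customer, product, and address records, copying only the fields the order page needs. The function name and the input shapes are illustrative assumptions.

```javascript
// Hypothetical helper: assembles an embedded order document at write time.
// `lines` is assumed to be [{ product, qty }] with product = { _id, name, price }.
function buildOrderDocument(customer, lines, shippingAddress) {
  const items = lines.map(({ product, qty }) => ({
    productId: product._id,
    name: product.name,   // snapshot of the name at purchase time
    price: product.price, // snapshot of the price at purchase time
    qty
  }));
  const sum = items.reduce((acc, item) => acc + item.price * item.qty, 0);
  return {
    // Copy only the customer fields the order needs, not the whole record
    customer: { _id: customer._id, name: customer.name, email: customer.email },
    items,
    shippingAddress: { ...shippingAddress },
    total: Math.round(sum * 100) / 100 // round to cents to avoid float drift
  };
}

// Usage with the Node.js driver (inside an async function):
//   await db.collection('orders').insertOne(buildOrderDocument(customer, lines, address));
//   const order = await db.collection('orders').findOne({ _id: orderId });
```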

Know the Limits of Embedding

A word of caution: MongoDB documents have a 16MB size limit. This means you can't embed unbounded data—don't try to store millions of log entries inside a single user document, or embed an unlimited comment history in a blog post.

The rule of thumb: embed data that's bounded and accessed together. If an array could grow indefinitely, consider referencing instead, or use the bucket pattern to split large datasets into manageable chunks.
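As a rough illustration of the bucket pattern, comments could be written into fixed-size bucket documents instead of one ever-growing array. The bucket size, the field names (`postId`, `count`, `comments`), the collection name, and the helper name are all assumptions for this sketch.

```javascript
const BUCKET_SIZE = 100; // assumed cap on comments per bucket document

// Hypothetical helper: builds updateOne arguments that append a comment to a
// bucket with free space, or create a new bucket when none has room.
function buildCommentBucketUpsert(postId, comment) {
  return {
    // Match a bucket for this post that is not yet full
    filter: { postId, count: { $lt: BUCKET_SIZE } },
    // Append the comment and keep the counter in step with the array
    update: { $push: { comments: comment }, $inc: { count: 1 } },
    // With upsert, a missing match creates a fresh bucket with count 1
    options: { upsert: true }
  };
}

// Usage with the Node.js driver (inside an async function):
//   const { filter, update, options } = buildCommentBucketUpsert(postId, comment);
//   await db.collection('comment_buckets').updateOne(filter, update, options);
```

Each bucket stays well under the document size limit, and reading a page of comments means fetching one bucket rather than one enormous post.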

Mistake #3: Putting an ORM Between You and the Database

A developer joins a MongoDB project. They google "MongoDB Node.js" and find Mongoose (strictly an ODM, an object-document mapper, though it plays the role an ORM plays for SQL). They install it. They define schemas. They use .populate(). At least that's how I was taught, and I'm sure countless other developers learned the same way.

    // Mongoose "makes MongoDB easy"
    const order = await Order.findById(orderId)
      .populate('customer')
      .populate('items.product')
      .populate('shippingAddress');

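That single statement is not a single query. Mongoose runs one query for the order and then one more for each populated path, so the developer thinks they wrote one query and the database sees four. Mongoose batches the ids for each path into one $in query, so 50 items do not mean 50 product queries. The round trips still add up, though, and populating inside a loop over orders brings back the classic N+1 problem.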
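The N+1 shape, and the batched alternative, can be sketched with a tiny in-memory stand-in that counts queries. `FakeCollection` is purely hypothetical: it mimics only `findOne` by `_id` and a `find` with `$in` so the example runs without a database.

```javascript
// Hypothetical in-memory stand-in that counts how many queries it receives.
class FakeCollection {
  constructor(docs) {
    this.docs = docs;
    this.queryCount = 0;
  }
  async findOne({ _id }) {
    this.queryCount += 1;
    return this.docs.find((d) => d._id === _id) ?? null;
  }
  find({ _id: { $in: ids } }) {
    this.queryCount += 1;
    const matches = this.docs.filter((d) => ids.includes(d._id));
    return { toArray: async () => matches };
  }
}

// N+1 shape: one round trip per referenced product.
async function loadProductsOneByOne(products, productIds) {
  const result = [];
  for (const id of productIds) {
    result.push(await products.findOne({ _id: id }));
  }
  return result;
}

// Batched shape: one round trip with $in, however many ids there are.
async function loadProductsBatched(products, productIds) {
  return products.find({ _id: { $in: productIds } }).toArray();
}
```

With 50 product ids, the first function issues 50 queries on top of the one that fetched the order, and the second issues one.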

The Case For and Against ORMs

To be fair, Mongoose has legitimate use cases. It's great for rapid prototyping, provides solid TypeScript support, and its middleware hooks can simplify authentication and validation logic. For small projects or teams new to MongoDB, the guardrails can be helpful.

But here's the problem: Mongoose teaches SQL patterns with MongoDB syntax. It wraps query results in full Mongoose document objects unless you ask for plain ones with .lean(). It hides what's actually happening. And .populate() makes it too easy to create performance nightmares without realizing it.

Think Instead: Understand Your Queries

Whether you use an ORM or not, you need to understand what your queries actually do. The same goes for validation. MongoDB has native schema validation, which can be stricter than what many Mongoose projects implement because it applies to every write, no matter which client sends it:

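    db.createCollection('orders', {
      validator: {
        $jsonSchema: {
          bsonType: 'object',
          // Every order must carry these fields
          required: ['customerId', 'items', 'total', 'status'],
          properties: {
            // 'decimal' matches only Decimal128 values; a plain number (double) is rejected
            total: { bsonType: 'decimal', minimum: 0 },
            // Any status outside this list fails validation
            status: { enum: ['pending', 'processing', 'shipped', 'delivered'] }
          }
        }
      }
    });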

The database enforces this. No library required.
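The same mechanism extends to embedded documents. As a sketch, a validator for an order that embeds its customer and items might look like this; the specific constraints (`minItems`, the accepted numeric types, the required fields) are illustrative choices, not a recommendation for every schema.

```javascript
// Numeric BSON types accepted for money and quantity fields in this sketch.
const NUMERIC = ['double', 'int', 'long', 'decimal'];

// Hypothetical validator for an order that embeds customer and items.
const embeddedOrderValidator = {
  $jsonSchema: {
    bsonType: 'object',
    required: ['customer', 'items', 'total'],
    properties: {
      customer: {
        bsonType: 'object',
        required: ['_id', 'name', 'email'],
        properties: {
          name: { bsonType: 'string' },
          email: { bsonType: 'string' }
        }
      },
      items: {
        bsonType: 'array',
        minItems: 1, // an order with no items is rejected
        items: {
          bsonType: 'object',
          required: ['productId', 'name', 'price', 'qty'],
          properties: {
            price: { bsonType: NUMERIC, minimum: 0 },
            qty: { bsonType: NUMERIC, minimum: 1 }
          }
        }
      },
      total: { bsonType: NUMERIC, minimum: 0 }
    }
  }
};

// Usage (mongosh): db.runCommand({ collMod: 'orders', validator: embeddedOrderValidator })
```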

If you do use Mongoose, use it consciously. Run explain() on your queries. Know when .populate() is triggering multiple round trips, and reach for .lean() when you only need plain objects.
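As a starting point for reading `explain('executionStats')` output, a small helper can compare how many documents a query examined with how many it returned. The helper name and the default threshold are assumptions; the `executionStats` field names are the ones MongoDB reports for a query on a single, unsharded collection.

```javascript
// Hypothetical helper: flags queries that examine far more documents than they return.
function summarizeExplain(explainOutput, maxRatio = 10) {
  const { nReturned, totalKeysExamined, totalDocsExamined } = explainOutput.executionStats;
  // Guard against division by zero when nothing was returned
  const ratio = totalDocsExamined / Math.max(nReturned, 1);
  return {
    nReturned,
    totalKeysExamined,
    totalDocsExamined,
    // Documents were read but no index keys were: likely a collection scan
    likelyCollectionScan: totalKeysExamined === 0 && totalDocsExamined > 0,
    wasteful: ratio > maxRatio
  };
}

// Usage (mongosh):
//   summarizeExplain(db.orders.find({ status: 'shipped' }).explain('executionStats'))
```

A query that examines thousands of documents to return a handful is usually missing an index, whichever library generated it.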

Conclusion

MongoDB doesn't fail. People fail to adapt to MongoDB.

Every disaster story I've seen follows the same pattern: developers treat MongoDB like SQL with different syntax. They normalize everything. They reference instead of embedding. They let an ORM hide what the database is actually doing.

The fix is simple:

- Embed data that's accessed together. Stop making the database reassemble documents that should never have been split apart.

- Accept strategic duplication. In many applications, reads far outnumber writes. Optimize accordingly, but respect the 16 MB document limit.

- Understand your queries. Whether you use an ORM or native drivers, know what your queries actually do.

And remember: the question was never "SQL vs MongoDB." The question is "what does my data look like, and how do I access it?"

If your data consists of independent entities that require complex joins, SQL's relational model is built for that. If your data belongs together and is always accessed as a single unit, MongoDB's document model removes unnecessary work.

Design your database to fit your data and the way you access it.
