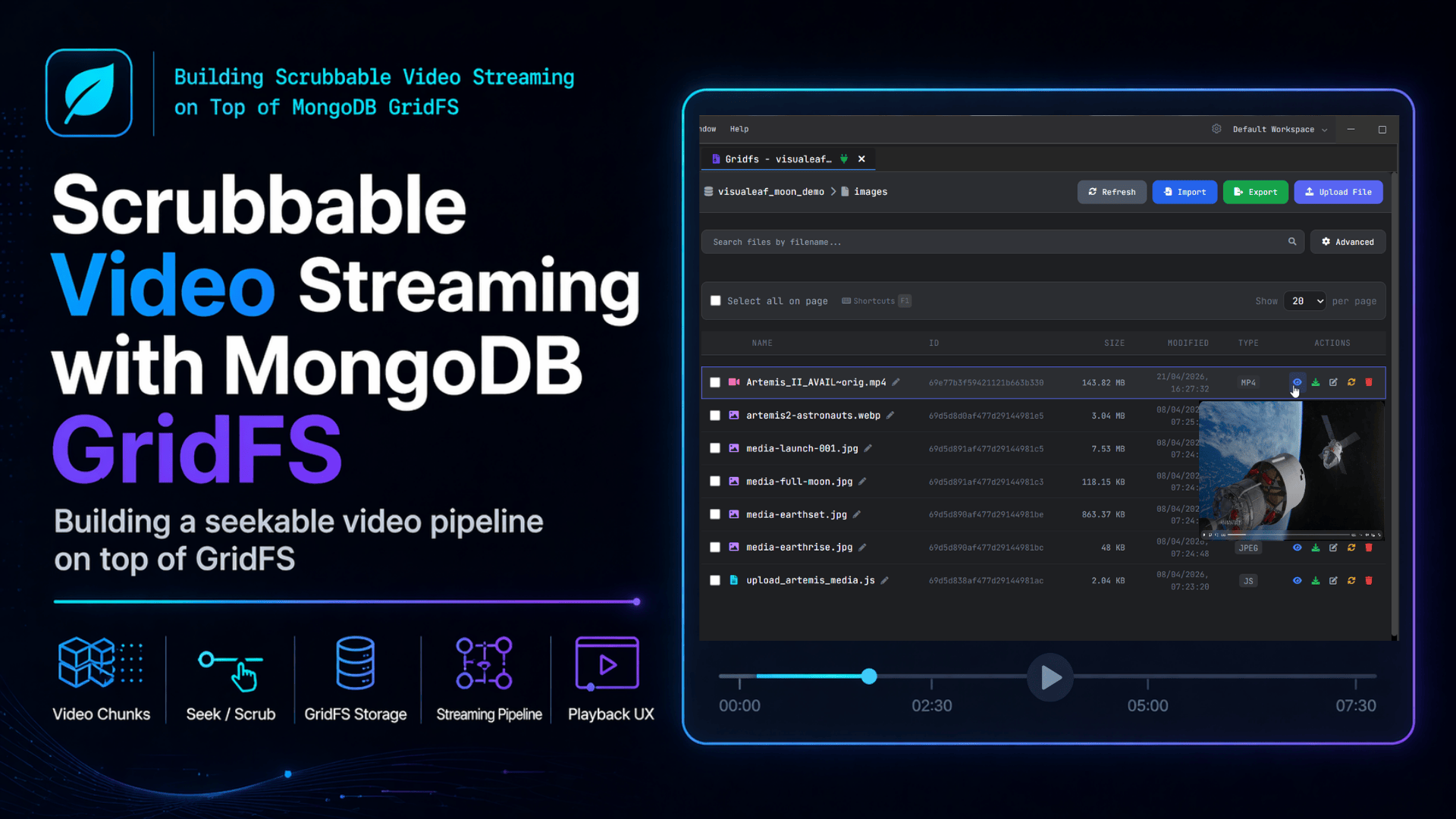

Building Scrubbable Video Streaming on Top of MongoDB GridFS

How I made video preview work in our MongoDB tool — and learned about an HTTP status code I'd somehow never heard of in 5 years of writing backends.

The Problem

This all started while I was working on my desktop client for MongoDB. One of the views inside it is a GridFS browser. Basically, you connect to a database, pick a bucket, and you get a little file-explorer thing for whatever binary blobs happen to live in there. Most of the time, people store screenshots and PDFs. Sometimes videos.

The first version of the video preview that I shipped was super basic. I literally just made a download endpoint and pointed an HTML5 <video> tag at it. It worked fine when I tested it with a tiny 4MB clip on my laptop, so I shipped it and moved on.

Then someone opened an actual video file in it (around 600MB, a screen recording, I think), and the whole thing kind of fell apart. The player would just sit there buffering for ages before showing any frames at all. And then if you tried to drag the scrub bar to the middle of the video, nothing would happen for like 20 seconds, and then it would start playing from there. So you could pretty obviously tell that even when the user just wanted the middle of the video, the backend was sending the entire file from the beginning every single time. Not great.

An HTTP Status Code I Had Never Heard Of

OK, so embarrassing admission time. I knew HTTP responses had codes, and I knew the general buckets (200s mean good, 300s mean redirect, 400s mean you did something wrong, 500s mean I did something wrong). But I had genuinely never, ever heard of 206 Partial Content. I thought responses were either "here is the thing you asked for" or "here is an error". I had no clue that HTTP has an entire protocol built in for "here is part of the thing, you can ask me for the rest later".

Apparently, this has been in HTTP since like 1999?? And every video player on the internet uses it. I felt like I'd been gatekept from a secret. Anyway, here is how it works, in case you also missed it:

- The browser sends a request with a header that looks like Range: bytes=1048576-2097151. That basically just means "give me the bytes from offset 1048576 up to offset 2097151" (so, that 1MB slice).

- The server is supposed to reply with status 206 instead of the usual 200, plus a header called Content-Range: bytes 1048576-2097151/618475520, and only the bytes for that slice in the body. The number after the slash is the total file size, which the player needs so it knows how long to draw the timeline.

- Then the player just stitches all the slices together as the user plays and seeks. It will issue a LOT of these per video. Like, a lot. They come in whatever order the player feels like.

There's also a small thing I missed for like a whole evening: even if you're responding with the full file (because the request didn't have a Range header), you still need to add a header called Accept-Ranges: bytes. Otherwise, the browser looks at your response, decides your server can't seek, and the scrub bar in the player just sits there dead. I was sending correct 206 responses on the second request, but the first request didn't advertise range support, so the player never tried. Took me forever to figure that out.
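To make the header rules above concrete, here is a minimal sketch of picking the status and headers for one request. RangeResponse and describeResponse are names made up for this illustration, and the function assumes the range has already been validated against the file length; a real endpoint would map the result onto its framework's response type.

```kotlin
// Hypothetical holder for the status code and headers of one response.
data class RangeResponse(val status: Int, val headers: Map<String, String>)

// range == null means the request carried no Range header.
// Assumes the range has already been validated against fileLength.
fun describeResponse(fileLength: Long, range: LongRange?): RangeResponse {
    // Advertise range support on every response, including the plain 200.
    val common = mapOf("Accept-Ranges" to "bytes")
    if (range == null) {
        return RangeResponse(200, common + ("Content-Length" to fileLength.toString()))
    }
    val sliceLength = range.last - range.first + 1
    return RangeResponse(
        206,
        common + mapOf(
            "Content-Range" to "bytes ${range.first}-${range.last}/$fileLength",
            "Content-Length" to sliceLength.toString()
        )
    )
}

fun main() {
    println(describeResponse(618_475_520L, null))
    println(describeResponse(618_475_520L, 1_048_576L..2_097_151L))
}
```

The point of building the common headers first is that Accept-Ranges ends up on both paths, so the very first (range-less) response already tells the player that seeking is allowed.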

The Spec References

Since I had to look all this stuff up while writing the post, I figured I'd just dump the references here:

- Range units. The only range unit the spec itself defines is bytes, and a server is allowed to ignore the Range header entirely. See RFC 9110 §14.1.

- Range syntax. A request can ask for one range (bytes=0-1023), an open-ended one (bytes=500-), a suffix (bytes=-1024 for "the last 1024 bytes"), or even multiple ranges in one request. See RFC 9110 §14.1.2.

- The 206 response itself. Defined in RFC 9110 §15.3.7. It must include either a Content-Range header (single range) or a Content-Type: multipart/byteranges body (multiple ranges).

- Range satisfiability. If the requested range is outside the file, reply 416 Range Not Satisfiable. See RFC 9110 §15.5.17. I clamp my range inputs instead of returning 416 because video players occasionally send slightly-past-the-end ranges.

- History. Range requests have been part of HTTP/1.1 since the 1990s and got their own document in RFC 7233 (2014). RFC 9110 (2022) folded everything back into one document. The MDN page for 206 is also good if RFC prose makes your eyes glaze over.
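The single-range forms listed above can be parsed with a few lines of Kotlin. This is a sketch under stated assumptions, not the code from the app: parseSingleRange is a hypothetical helper, it follows the clamping approach described above instead of answering 416, and it returns null for anything it does not understand (including multi-range requests) so the caller can fall back to a plain 200.

```kotlin
// Parses "bytes=0-1023", "bytes=500-" or "bytes=-1024" into an inclusive byte range.
// Returns null when the header is absent, malformed, or asks for multiple ranges.
fun parseSingleRange(header: String?, fileLength: Long): LongRange? {
    if (header == null || fileLength <= 0) return null
    val match = Regex("""bytes=(\d*)-(\d*)""").matchEntire(header.trim()) ?: return null
    val (rawFirst, rawLast) = match.destructured
    // An empty side is allowed; a side that overflows Long is treated as malformed.
    val first = if (rawFirst.isEmpty()) null else (rawFirst.toLongOrNull() ?: return null)
    val last = if (rawLast.isEmpty()) null else (rawLast.toLongOrNull() ?: return null)
    val lastIndex = fileLength - 1
    return when {
        // "bytes=A-" or "bytes=A-B": clamp both ends into the file.
        first != null -> {
            val start = first.coerceIn(0L, lastIndex)
            val end = (last ?: lastIndex).coerceIn(start, lastIndex)
            start..end
        }
        // "bytes=-N": the last N bytes of the file.
        last != null && last > 0 -> (fileLength - last).coerceAtLeast(0L)..lastIndex
        // "bytes=-" or "bytes=-0" carries nothing usable.
        else -> null
    }
}

fun main() {
    println(parseSingleRange("bytes=0-1023", 5000))
    println(parseSingleRange("bytes=500-", 5000))
    println(parseSingleRange("bytes=-1024", 5000))
    println(parseSingleRange("bytes=4000-9999", 5000))
}
```

Note that clamping is a deliberate leniency: a strict server would answer 416 for a start position past the end of the file, while this sketch quietly serves the last byte.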

GridFS Is Actually Really Cool

OK, I want to spend a minute on this part because, going into the project, I kind of assumed GridFS would be the boring, annoying part of the whole thing. Like, MongoDB is a document database; it's not really a "store your files" database, so GridFS always felt to me like a hack on top of a thing that wasn't built for it. But after actually building this feature, I'm honestly a fan now.

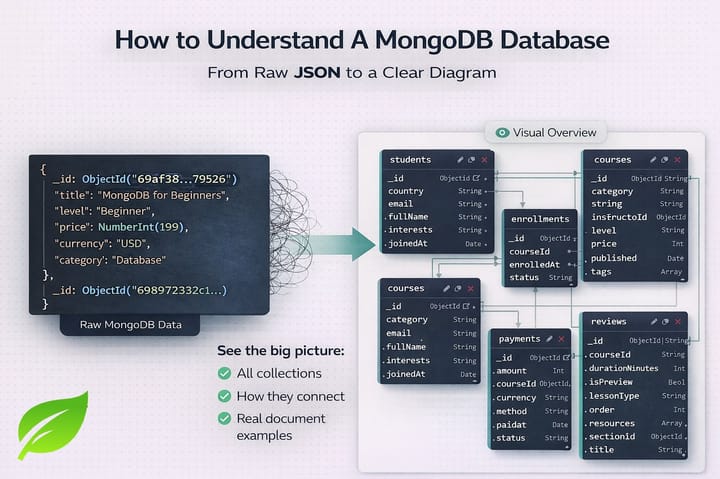

Quick GridFS 101

GridFS exists because MongoDB documents have a maximum size of 16MB. So if you want to store something bigger than that in MongoDB (a video, a big PDF, whatever), you can't just stick it in a document. GridFS is the convention MongoDB came up with: when you upload a file, the driver chops it into fixed-size chunks (255KB by default) and stores them across two collections.

- <bucket>.files has one document per file. That document holds the metadata: the filename, the total length, the chunk size, the content type, and whatever else you want to attach.

- <bucket>.chunks has one document per chunk. Each chunk document has a files_id pointing back to the file, an ordinal number called n (so 0, 1, 2, 3…), and a binary data field with the actual bytes.
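As a rough mental model of that layout, here is a small in-memory sketch of the split. FileDoc and ChunkDoc are simplified stand-ins invented for this example (real GridFS documents carry more fields, and the driver does the splitting for you); the only facts taken from above are the 255KB default chunk size and the files_id / n / data shape.

```kotlin
// Simplified stand-ins for the two GridFS document shapes.
data class FileDoc(val id: String, val filename: String, val length: Long, val chunkSize: Int)
class ChunkDoc(val filesId: String, val n: Int, val data: ByteArray)

const val DEFAULT_CHUNK_SIZE = 255 * 1024 // 261120 bytes

// Splits a payload into fixed-size chunks; only the last chunk may be shorter.
fun splitIntoChunks(
    id: String,
    filename: String,
    bytes: ByteArray,
    chunkSize: Int = DEFAULT_CHUNK_SIZE
): Pair<FileDoc, List<ChunkDoc>> {
    val chunks = (0 until bytes.size step chunkSize).mapIndexed { n, from ->
        ChunkDoc(id, n, bytes.copyOfRange(from, minOf(from + chunkSize, bytes.size)))
    }
    return FileDoc(id, filename, bytes.size.toLong(), chunkSize) to chunks
}

fun main() {
    val (file, chunks) = splitIntoChunks("file-1", "clip.mp4", ByteArray(600_000))
    println(file)
    println(chunks.map { "n=${it.n} size=${it.data.size}" })
}
```

A 600,000-byte payload ends up as two full chunks of 261,120 bytes plus one 77,760-byte tail, which is why the last chunk always needs a size check when trimming.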

Why This Layout Is Perfect for Streaming

Here's the part that I think is genuinely awesome: this layout is basically perfect for streaming. Like, if you sat down and said "I want to design a file storage system specifically for serving byte ranges efficiently", you would probably end up with something that looks A LOT like this. Every file is already sitting on disk pre-cut into small, individually addressable, indexed pieces. You don't have to encode anything special, you don't need a manifest file, you don't need to copy your files to a separate "streaming server". The chunks are just sitting right there, waiting for you to ask MongoDB for them by number.

The thing I find kind of mind-blowing is that the chunks aren't actually there for streaming reasons at all. They're there because of the 16MB document limit. But the side effect is that you basically get a free random-access primitive that lets you do stuff that would otherwise need a completely separate system (like an HLS streaming server, or a CDN with byte-range support). For free. Just because of how the storage layout happens to work.

The catch (and this is where I went wrong for a while) is that the MongoDB driver hides all of this from you. GridFSBucket.openDownloadStream(id) returns an InputStream that pretends GridFS is just a normal sequential file. So if you only ever use the convenience API, you'd never even realize the chunks are there.

My First Attempt (Which Did Not Work)

My first attempt at supporting range requests was the totally obvious one. I figured: I have an InputStream, I'll just call skip(start) on it to fast-forward to where the player wants to start, and then copy end - start + 1 bytes into the response. Took me maybe 15 minutes.

The bytes were correct. The HTTP responses validated. The video played. And it was still really, really slow. Like, seeking to byte 500MB of a 1GB video took roughly the same amount of time as just downloading the entire 1GB.

What skip() Actually Does

InputStream.skip() on a GridFS download stream is not a seek. Under the hood, it walks chunks sequentially from n=0, reads each one, and throws them away one by one until it has burned through the number of bytes you wanted to skip. So if the browser asks for byte 500,000,000, the server happily reads HALF A GIGABYTE of chunks out of MongoDB and just throws them in the trash. And then when the player asks for the next slice, it does the whole thing all over again from byte 0.

In hindsight, this is even kind of documented. The Java InputStream docs say skip() is allowed to actually read the bytes if it has to. The real mistake on my part was wrapping a perfectly random-access storage layout (the chunks!) in a sequential abstraction (the InputStream) and then expecting the abstraction to magically let me jump around.
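The "skip() is allowed to read" part is easy to see with plain java.io, no MongoDB involved. The sketch below uses a made-up CountingStream that only implements single-byte read() and deliberately does not override skip(), so the base-class default applies. It says nothing about what any particular driver stream does internally; it only shows what the InputStream contract permits.

```kotlin
import java.io.InputStream

// Hypothetical sequential source: hands out zero bytes and counts how many it produced.
// It does NOT override skip(), so java.io.InputStream's default implementation is used.
class CountingStream(private val size: Long) : InputStream() {
    var bytesProduced = 0L
        private set

    override fun read(): Int {
        if (bytesProduced >= size) return -1
        bytesProduced++
        return 0
    }
}

fun main() {
    val stream = CountingStream(size = 10_000_000)
    val skipped = stream.skip(5_000_000)
    // Both numbers match: every skipped byte was read and thrown away.
    println("skipped=$skipped, bytes actually read=${stream.bytesProduced}")
}
```

With the default implementation, skipping is just reading into a scratch buffer, so the cost of a "seek" grows with the distance skipped.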

Talking to the Chunks Directly

Once I stopped trying to use the stream API, the fix was actually pretty small. The chunks are just MongoDB documents. They have an n field that tells you where they sit in the file. The file metadata tells me the chunk size. So if I know the byte range I want, I can do a tiny bit of math to figure out exactly which chunks contain those bytes, and then go ask MongoDB for those specific chunks directly.

    import com.mongodb.client.model.Filters
    import com.mongodb.client.model.Sorts

    val gridFSFile = gridFSBucket.find(Filters.eq("_id", objectId)).firstOrNull()
        ?: throw IllegalArgumentException("File not found")

    val fileLength = gridFSFile.length
    val chunkSize = gridFSFile.chunkSize // 261120 bytes by default

    // Clamp the requested range into the file (assumes fileLength > 0).
    val actualStart = startByte.coerceIn(0L, fileLength - 1)
    val actualEnd = endByte.coerceIn(actualStart, fileLength - 1)

    // Translate byte range to chunk range.
    val startChunk = (actualStart / chunkSize).toInt()
    val endChunk = (actualEnd / chunkSize).toInt()

    // Remember the partial chunk offsets at each end so we can trim later.
    val offsetInStartChunk = (actualStart % chunkSize).toInt()
    val bytesNeededFromEndChunk = ((actualEnd % chunkSize) + 1).toInt()

    // Hit the chunks collection directly. No download stream, no skip.
    val chunksCollection = db.getCollection("${collectionName}.chunks")
    val chunksCursor = chunksCollection.find(
        Filters.and(
            Filters.eq("files_id", objectId),
            Filters.gte("n", startChunk),
            Filters.lte("n", endChunk)
        )
    ).sort(Sorts.ascending("n"))

The part that made me really happy: GridFS already creates a compound index on { files_id: 1, n: 1 } automatically when it writes a file. Which means this query is a tight, indexed range scan that returns exactly the chunks the request actually needs. Nothing extra. No bytes get pulled out of MongoDB just to be thrown away.

    A 1 GB video, 255 KB chunks, user seeks to byte ~500 MB

    openDownloadStream + skip()
    ┌─────────────────────────────────────────────────────────────┐
    │ ░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░ ████████████ │
    └─────────────────────────────────────────────────────────────┘
     ↑ ~2,000 chunks pulled from Mongo and discarded ↑ chunks the
                                                       player wants

    Direct chunk query (n >= startChunk AND n <= endChunk)
    ┌─────────────────────────────────────────────────────────────┐
    │                                                ████████████ │
    └─────────────────────────────────────────────────────────────┘
                                                     ↑ exactly the
                                                       range needed
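The chunk arithmetic is easier to trust once it is pulled out into a pure function and fed real numbers. ChunkWindow and chunkWindow are names invented for this sketch; the four formulas are the same ones used in the query code above.

```kotlin
// Which chunks cover an inclusive byte range, and how much to trim at each end.
data class ChunkWindow(
    val startChunk: Int,
    val endChunk: Int,
    val offsetInStartChunk: Int,
    val bytesNeededFromEndChunk: Int
)

fun chunkWindow(start: Long, end: Long, chunkSize: Int): ChunkWindow {
    require(chunkSize > 0 && start in 0..end) { "invalid range $start-$end" }
    return ChunkWindow(
        startChunk = (start / chunkSize).toInt(),
        endChunk = (end / chunkSize).toInt(),
        offsetInStartChunk = (start % chunkSize).toInt(),
        bytesNeededFromEndChunk = (end % chunkSize + 1).toInt()
    )
}

fun main() {
    // The 1MB slice from the Range example, with the default 255KB chunk size.
    println(chunkWindow(1_048_576, 2_097_151, 261_120))
}
```

For that request the answer is chunks 4 through 8: skip the first 4,096 bytes of chunk 4, take chunks 5 to 7 whole, and keep the first 8,192 bytes of chunk 8. That is five documents fetched for a 1MB slice (257,024 + 3 × 261,120 + 8,192 = 1,048,576 bytes), no matter how deep into the file the slice sits.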

Trimming the Edges

Just querying the right chunks isn't quite enough. The first chunk in the cursor almost always has some bytes BEFORE the byte the player asked for (because the start byte is usually somewhere in the middle of a chunk), and the last chunk has some bytes AFTER the requested end. So I wrote a small InputStream class that wraps the cursor and trims the bytes as it goes:

    import java.io.InputStream
    import org.bson.Document

    private class GridFSChunkRangeInputStream(
        private val chunksCursor: Iterator<Document>,
        private val startChunk: Int,
        private val endChunk: Int,
        private val offsetInStartChunk: Int,
        private val bytesNeededFromEndChunk: Int,
        private val chunkSize: Int,
        private val totalBytesToRead: Long
    ) : InputStream() {

        private var currentChunkData: ByteArray? = null
        private var positionInCurrentChunk = 0
        private var totalBytesRead = 0L

        // Single-byte read is abstract in InputStream; delegate to the bulk version.
        override fun read(): Int {
            val one = ByteArray(1)
            return if (read(one, 0, 1) == -1) -1 else (one[0].toInt() and 0xFF)
        }

        override fun read(b: ByteArray, off: Int, len: Int): Int {
            if (len == 0) return 0 // InputStream contract: a zero-length read returns 0
            if (totalBytesRead >= totalBytesToRead) return -1

            var bytesReadTotal = 0
            var currentOff = off
            // Never hand out more than the requested range, even if the caller's buffer is bigger.
            var remainingLen = minOf(len.toLong(), totalBytesToRead - totalBytesRead).toInt()

            while (remainingLen > 0) {
                // Current chunk exhausted (or none loaded yet): pull the next one from the cursor.
                if (currentChunkData == null ||
                    positionInCurrentChunk >= currentChunkData!!.size) {
                    if (!loadNextChunk()) break
                }

                val available = currentChunkData!!.size - positionInCurrentChunk
                val toCopy = minOf(available, remainingLen)
                System.arraycopy(currentChunkData!!, positionInCurrentChunk,
                    b, currentOff, toCopy)

                positionInCurrentChunk += toCopy
                currentOff += toCopy
                remainingLen -= toCopy
                bytesReadTotal += toCopy
                totalBytesRead += toCopy
            }

            return if (bytesReadTotal == 0) -1 else bytesReadTotal
        }

        // loadNextChunk(): Boolean is omitted here; its trimming logic is discussed next.
    }

The interesting bit is the loadNextChunk() method. Depending on which chunk it's looking at, it has to do different things:

    currentChunkData = when {
        // Single-chunk range: trim both ends inside one chunk.
        currentChunkNumber == startChunk && currentChunkNumber == endChunk -> {
            val endOffset = minOf(offsetInStartChunk + totalBytesToRead.toInt(),
                fullChunkData.size)
            fullChunkData.copyOfRange(offsetInStartChunk, endOffset)
        }

        // First chunk: drop the prefix before the requested start.
        currentChunkNumber == startChunk && offsetInStartChunk > 0 ->
            fullChunkData.copyOfRange(offsetInStartChunk, fullChunkData.size)

        // Last chunk: drop the suffix after the requested end.
        currentChunkNumber == endChunk ->
            fullChunkData.copyOfRange(0, minOf(bytesNeededFromEndChunk, fullChunkData.size))

        // A middle chunk: pass it through as-is.
        else -> fullChunkData
    }
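To try the trimming without a database, here is a self-contained sketch that runs over in-memory chunks. It is not the class from the app: Chunk, ChunkRangeStream and readRange are hypothetical names, the chunk is a bare stand-in for the BSON document, and the four-branch when is collapsed into two independent trims (one for the start, one for the end), which is a different formulation that happens to handle the single-chunk case without a special branch.

```kotlin
import java.io.InputStream

// Stand-in for a chunk document: only the two fields the stream needs.
class Chunk(val n: Int, val data: ByteArray)

// Streams bytes [start, end] (inclusive) from chunks that arrive sorted by n
// and already limited to the chunks covering that range.
class ChunkRangeStream(
    private val chunks: Iterator<Chunk>,
    start: Long,
    end: Long,
    chunkSize: Int
) : InputStream() {
    private val startChunk = (start / chunkSize).toInt()
    private val endChunk = (end / chunkSize).toInt()
    private val offsetInStartChunk = (start % chunkSize).toInt()
    private val bytesNeededFromEndChunk = (end % chunkSize + 1).toInt()
    private var current = ByteArray(0)
    private var position = 0

    private fun loadNextChunk(): Boolean {
        if (!chunks.hasNext()) return false
        val chunk = chunks.next()
        // Trim the front only on the start chunk and the back only on the end chunk.
        val from = if (chunk.n == startChunk) offsetInStartChunk else 0
        val to = if (chunk.n == endChunk) minOf(bytesNeededFromEndChunk, chunk.data.size)
                 else chunk.data.size
        current = chunk.data.copyOfRange(minOf(from, to), to)
        position = 0
        return true
    }

    override fun read(b: ByteArray, off: Int, len: Int): Int {
        if (len == 0) return 0
        var copied = 0
        while (copied < len) {
            if (position >= current.size && !loadNextChunk()) break
            val n = minOf(current.size - position, len - copied)
            System.arraycopy(current, position, b, off + copied, n)
            position += n
            copied += n
        }
        return if (copied == 0) -1 else copied
    }

    override fun read(): Int {
        val one = ByteArray(1)
        return if (read(one, 0, 1) == -1) -1 else (one[0].toInt() and 0xFF)
    }
}

// Emulates the indexed query (startChunk <= n <= endChunk) over an in-memory list.
fun readRange(all: List<Chunk>, start: Long, end: Long, chunkSize: Int): List<Byte> {
    val wanted = all.filter { it.n.toLong() in (start / chunkSize)..(end / chunkSize) }
    return ChunkRangeStream(wanted.iterator(), start, end, chunkSize).readBytes().toList()
}

fun main() {
    val chunkSize = 10
    val file = ByteArray(35) { it.toByte() } // byte value == byte offset
    val all = file.toList().chunked(chunkSize).mapIndexed { n, part -> Chunk(n, part.toByteArray()) }
    println(readRange(all, 12, 27, chunkSize)) // spans chunks 1 and 2
    println(readRange(all, 13, 16, chunkSize)) // start and end inside chunk 1
}
```

Because each byte's value equals its offset in this toy file, a wrong trim shows up immediately as a wrong first or last number in the output.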

The Single-Chunk Bug

Quick story about that single-chunk case (the first one in the when block). I got that wrong on my first try. I had it as just "first chunk" + "last chunk" originally, and small range requests broke because of it. Video players issue tiny range requests all the time; when they're first reading a video file's header, for example, they might ask for the first 2KB. Both of my branches were trying to claim that one chunk, and they were producing nonsense bytes.

So yeah, definitely add the explicit "the start AND the end are in the same chunk" case if you ever try to build something like this.

The Bulk Read Optimization

Another thing I got wrong: I only implemented the single-byte read() method, not the bulk read(b, off, len) overload. The video played, but it was bizarrely slow. Apparently, Spring's InputStreamResource always tries to call the bulk version, and the bulk version can use System.arraycopy to move whole kilobytes at a time instead of doing a virtual method call per byte. After I added the bulk overload, streaming was something like 30x faster. Cheap fix, huge win.

The Broken Pipe Panic

One last thing that absolutely nobody warned me about. A working video player cancels in-flight requests CONSTANTLY. Every time the user drags the scrub bar even a tiny bit, the browser kills any range requests it doesn't need anymore. On the server side, this shows up as IOException: Broken pipe while you're trying to write the response.

The first time I saw a wall of these in my logs, I genuinely thought I had broken something really badly. But I had not broken anything. It is just the most normal thing a video endpoint experiences. The fix is to just catch the IO exception and return a quiet 204:

    } catch (e: java.io.IOException) {
        // Client disconnected mid-stream. This is normal during seeking.
        ResponseEntity.status(HttpStatus.NO_CONTENT).build()
    }

My new rule of thumb: if a video endpoint's logs are super quiet, the endpoint is probably broken. They should be full of cancellations, and you should ignore them.
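If you write the copy loop yourself instead of letting the framework do it, the same idea looks roughly like the sketch below. Everything here is an illustration with invented names (CopyOutcome, copyUntilDisconnect, HangUpAfter), and it assumes that any IOException thrown while writing means the client went away. Failures while reading from the source are deliberately left to propagate, since those are real errors.

```kotlin
import java.io.ByteArrayInputStream
import java.io.IOException
import java.io.InputStream
import java.io.OutputStream

data class CopyOutcome(val bytesSent: Long, val clientDisconnected: Boolean)

// Copies input to output; a write failure is reported as a disconnect instead of thrown.
fun copyUntilDisconnect(input: InputStream, output: OutputStream, bufferSize: Int = 8192): CopyOutcome {
    val buffer = ByteArray(bufferSize)
    var sent = 0L
    while (true) {
        val n = input.read(buffer) // failures reading the source still propagate
        if (n == -1) return CopyOutcome(sent, clientDisconnected = false)
        try {
            output.write(buffer, 0, n)
        } catch (e: IOException) {
            // Typically "Broken pipe" or "Connection reset": the player cancelled the request.
            return CopyOutcome(sent, clientDisconnected = true)
        }
        sent += n
    }
}

// Hypothetical client that hangs up after accepting `limit` bytes.
class HangUpAfter(private val limit: Int) : OutputStream() {
    private var accepted = 0
    override fun write(b: Int) {
        if (accepted >= limit) throw IOException("Broken pipe")
        accepted++
    }
}

fun main() {
    val video = ByteArrayInputStream(ByteArray(100_000))
    println(copyUntilDisconnect(video, HangUpAfter(limit = 20_000)))
}
```

Returning an outcome instead of throwing makes it easy to log disconnects at debug level and keep the error log for failures that actually need attention.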

Where Things Ended Up

Opening a 2GB video file in the app now shows the first frame almost immediately. The player makes a small range request for the file header (usually a few hundred KB at most), the server resolves it to one or two chunks, and that's the whole network conversation before playback starts. Dragging the scrub bar to anywhere in the file feels basically instant; it's bounded by network round-trip time now, not by where in the file you dragged. And memory on the server stayed flat the whole time, because each request only ever holds one chunk in flight.

The whole thing turned out to be maybe 80 lines of Kotlin, including the custom InputStream class. Honestly, the hard part wasn't writing the code. The hard part was figuring out that I had to write it in the first place, instead of just trusting the helper that the driver gave me.

But the bigger takeaway for me wasn't really about the code. It turns out GridFS is way more interesting than I gave it credit for. People talk about it like it's the boring corner of MongoDB where blobs go to die. But the chunked layout it uses is genuinely a really nice substrate for streaming. Once you stop thinking of chunks as a hidden implementation detail and start thinking of them as the addressable unit they actually are, a bunch of stuff that I would've assumed needed a separate media server turns out to be one indexed MongoDB query away.

Further Reading

HTTP Range Requests and 206

- RFC 9110 §14 — Range Requests — The current canonical spec

- RFC 9110 §15.3.7 — 206 Partial Content

- MDN: HTTP range requests — Plain-English overview

- MDN: 206 Partial Content

HTML5 Video Element

- WHATWG HTML spec — Media elements

- MDN: Media container formats — Why some containers stream better than others

MongoDB GridFS

- MongoDB docs — GridFS

- GridFS specification — The cross-driver spec

- MongoDB Java/Kotlin driver docs

- The 16MB BSON document limit

Adjacent Things Worth Knowing

- RFC 8216 — HTTP Live Streaming (HLS) — The "real" answer for video at scale

- ffmpeg -movflags +faststart — Makes MP4 files start playing instantly

- nginx sendfile and byte-range handling — If your bytes live on a filesystem

Visualeaf is a desktop client for MongoDB. The GridFS browser lets you preview videos, images, and documents stored in any bucket — with instant seeking powered by the technique described in this post.
